
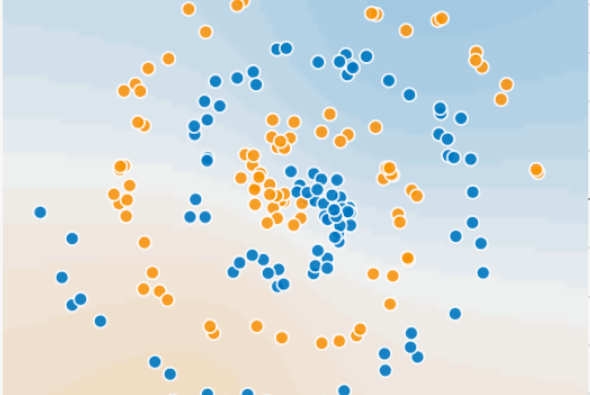

Credit: Daniel Smilkov and Shan Carter

Basic Structure/Function of Neural Networks

Computer scientist Yoshua Bengio, in a recent Scientific American article entitled ‘Springtime for AI – The Rise of Deep Learning’, explained why complex neural networks are vital to true artificial intelligence as people have long imagined it. It seems reasonable that the way to make computers as smart as humans is to program them to perform like human brains. But considering how little we know about how the brain functions, the task looks more than a little overwhelming.

A graphic by Jen Christiansen helps clarify the basic structure and function of neural networks. The so-called ‘hidden layers’ play a key role in breaking an image down into visual components in order to decode it as a whole. It is known that the layers act in order from input to output, with each layer handling progressively more complex information.
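The ordering from input to output can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `forward` function, the `tanh` activation, and every weight value are hypothetical and are not taken from the article or from any real network.

```python
import math

def forward(layers, inputs):
    """Pass inputs through each layer in order and keep every layer's output."""
    activations = [inputs]
    for weights, biases in layers:
        previous = activations[-1]
        # Each neuron sums its weighted inputs, adds a bias, and squashes the result.
        output = [
            math.tanh(sum(w * x for w, x in zip(row, previous)) + b)
            for row, b in zip(weights, biases)
        ]
        activations.append(output)
    return activations

# Hypothetical network: 2 inputs -> 3 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[1.0, -1.0, 0.5]], [0.0]),                                 # output layer
]

acts = forward(layers, [1.0, 2.0])
for depth, values in enumerate(acts):
    print("layer", depth, values)
```

Each entry in `acts` is the output of one layer, so the hidden layer's values can be inspected directly instead of remaining hidden.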

Beyond this, however, the hidden layers are, as their name suggests, shrouded in mystery. Daniel Smilkov and Shan Carter created a neural network playground, as part of a recent collaborative project known as TensorFlow, which aims to demystify the hidden layers by letting users interact and experiment with them.

TensorFlow – Open Source Tool for Neural Networks

There is a lot going on in the visualization, and Fernanda Viegas and Martin Wattenberg explained some of it in their keynote talk at OpenVisConf. Both are part of the team behind TensorFlow, a much more complex, open source tool for using neural networks in real-world applications. Instead of something as complicated as faces, the neural network playground uses blue and orange points scattered within a field to teach the machine how to find and echo patterns.

Users can select dot arrangements of varying complexity and modify the learning system by adding new hidden layers and new neurons within each layer. Each time the user presses the ‘play’ button, they can watch the background colour gradient shift to approximate the arrangement of blue and orange dots. As the pattern gets more complex, extra neurons and layers help the machine complete the task more successfully.
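To make the idea concrete, here is a minimal sketch of what pressing ‘play’ amounts to: a tiny network with one hidden layer repeatedly adjusts its weights until its output separates blue points from orange ones. It does not use TensorFlow or the playground's code. The dataset, network size, learning rate, and all function names are assumptions chosen for illustration.

```python
import math
import random

def make_clusters(n, rng):
    """Two well-separated clouds: label 1 (blue) near (2, 2), label 0 (orange) near (-2, -2)."""
    data = []
    for _ in range(n):
        data.append(((rng.gauss(2, 0.5), rng.gauss(2, 0.5)), 1))
        data.append(((rng.gauss(-2, 0.5), rng.gauss(-2, 0.5)), 0))
    return data

def init_network(n_hidden, rng):
    """One hidden layer of n_hidden neurons with small random starting weights."""
    return {
        "w1": [[rng.uniform(-0.5, 0.5) for _ in range(2)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "w2": [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)],
        "b2": 0.0,
    }

def predict(net, point):
    """Return the hidden activations and the probability that the point is blue."""
    hidden = [math.tanh(w[0] * point[0] + w[1] * point[1] + b)
              for w, b in zip(net["w1"], net["b1"])]
    z = sum(w * h for w, h in zip(net["w2"], hidden)) + net["b2"]
    return hidden, 1.0 / (1.0 + math.exp(-z))

def train(net, data, epochs=50, lr=0.1):
    """Plain stochastic gradient descent on the cross-entropy loss."""
    for _ in range(epochs):
        for point, label in data:
            hidden, p = predict(net, point)
            err = p - label  # gradient of the loss with respect to z
            for j, h in enumerate(hidden):
                # Compute the hidden-layer gradient before w2[j] is updated.
                grad_h = err * net["w2"][j] * (1 - h * h)
                net["w2"][j] -= lr * err * h
                net["w1"][j][0] -= lr * grad_h * point[0]
                net["w1"][j][1] -= lr * grad_h * point[1]
                net["b1"][j] -= lr * grad_h
            net["b2"] -= lr * err

def accuracy(net, data):
    """Fraction of points whose predicted colour matches their label."""
    correct = sum((predict(net, pt)[1] > 0.5) == (label == 1) for pt, label in data)
    return correct / len(data)

rng = random.Random(0)
data = make_clusters(50, rng)
net = init_network(4, rng)
train(net, data)
print("training accuracy:", accuracy(net, data))
```

The two clouds here are deliberately easy to separate. Harder arrangements, such as the more complex patterns the playground offers, are where extra neurons and layers start to matter.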

Expressive Features – Connections among Neurons

In addition to the neuron layers, the machine has other expressive features, such as the connections among the neurons. The connections appear as either blue or orange lines. Blue is positive, meaning the output for each neuron is the same as its content, while orange is negative, meaning the output is the opposite of each neuron’s values.
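The sign convention can be illustrated with a short sketch. It assumes that a connection simply multiplies a neuron's output by a weight. The helper names `connection_colour` and `transmitted`, and the activation value, are made up for this example and are not the playground's actual code.

```python
def connection_colour(weight):
    """Hypothetical helper: blue for a positive weight, orange for a negative one."""
    return "blue" if weight >= 0 else "orange"

def transmitted(neuron_output, weight):
    """Signal passed along a connection: the neuron's output scaled by the weight."""
    return neuron_output * weight

neuron_output = 0.8  # made-up activation value
for weight in (1.0, -1.0):
    print(connection_colour(weight), transmitted(neuron_output, weight))
```

With a weight of 1.0 the value 0.8 is passed along unchanged on a blue connection, and with a weight of -1.0 it arrives as -0.8 on an orange one.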

Moreover, the thickness and opacity of the connection lines indicate the confidence of the prediction each neuron makes, much like the connections in our brains that strengthen as we progress through a learning process. Interestingly, as we improve at building neural networks for machines, we may end up revealing information about how our own brains function.

Visualizing and playing with the hidden layers seems like a way of facilitating this process, and also of making the concept of deep learning accessible to a broader audience.
